Schoening, T., Köser, K., & Greinert, J. (2018). An acquisition, curation and management workflow for sustainable, terabyte-scale marine image analysis. Scientific Data, 5, 180181. DOI: 10.1038

When you take a picture with your phone, it often gets uploaded to a remote database. Tech companies call this “cloud storage.” If you are an iPhone aficionado, those images end up in iCloud; if you are an Android user, they likely show up in Google Photos. Each image is stored with metadata recording details such as when and where the photo was taken, and that information is used to archive, sort, and display your pictures in a meaningful way.

In essence, the image data you capture gets shipped off and stored in a remote location. The process has been standardized and streamlined to the point that billions of smartphone users around the world can quickly store and retrieve their photos, and to everyday users the whole pipeline feels effortless. Scientists are entering an era where they need a similar system for their data.

Modern oceanographers are producing loads of image data. From biologists to climatologists to physical oceanographers to geophysicists, everyone is taking pictures, and lots of them: it is not at all uncommon for scientists to return from sea with tens to hundreds of terabytes of data from a single cruise. But most of this data sits idle, never analyzed and never shared.

Data management is a distinctly unsexy topic. Everyone wants to skip straight from their adventures at sea to discussing their exciting new discoveries. But without rigorous techniques for storing and maintaining the vast amounts of data being collected, much of it will remain unanalyzed. Part of the reason so much oceanographic data is underutilized is that scientists often lack a clear methodology for handling it. Recognizing these challenges, Timm Schoening and his colleagues at the Helmholtz-Center for Ocean Research and the Christian-Albrechts University in Kiel, Germany, set out to provide a roadmap.

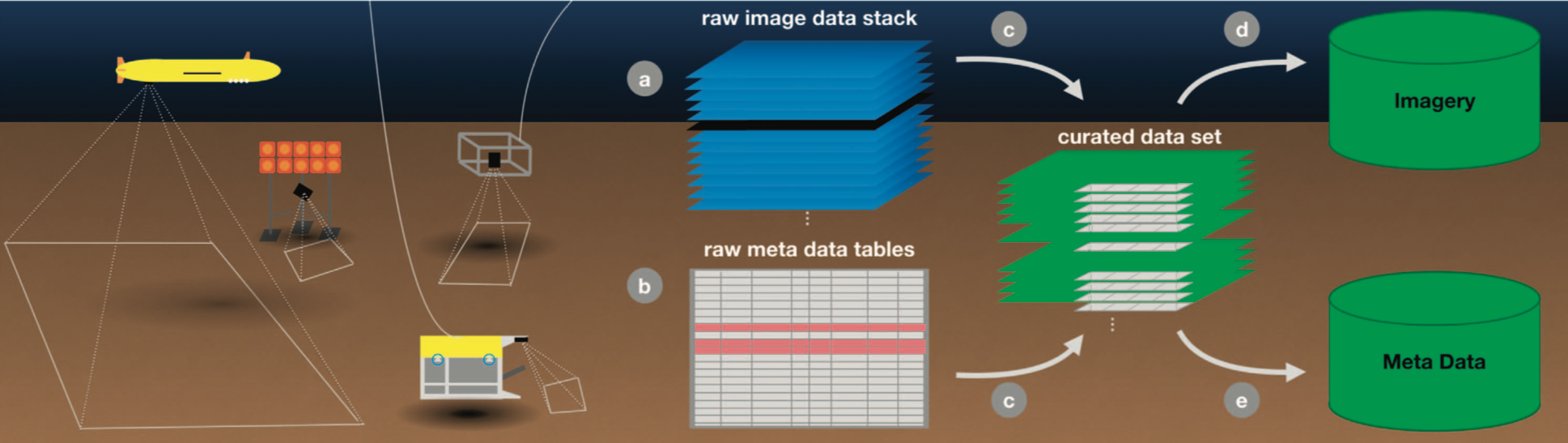

In their recent paper, the team laid out a set of best practices to ensure that data is captured and stored as efficiently as possible. To illustrate their proposed workflow, the group walked through the decisions they made while collecting, storing, and distributing a terabyte-scale image set documenting a simulated deep-sea mining site in the equatorial Pacific Ocean.

Broadly speaking, the group organized their fieldwork around three main stages: data acquisition, curation, and long-term management. Each stage involved plenty of nitty-gritty detail and difficult decisions about what exactly the goals of the project were. At every step, Schoening emphasizes the need to think about the future. What is the data going to be used for in the short term? How about the long term? What information should be kept? Where will it live, and how long does it need to live there?

To collect their data, Schoening and his group outfitted an Autonomous Underwater Vehicle (AUV) with a camera system to photograph the seafloor. To support future studies, each image was stored with a bunch of metadata, including navigation information and environmental parameters: puzzle pieces that might come in handy later. Over 21 dives, the system collected nearly 500,000 images totaling about 30 terabytes. Once safely ashore, the data was cleaned and analyzed by hand before being stored in three separate locations. Finally, after some preliminary analysis, the group made the data publicly available on PANGAEA, an Open Access earth sciences data repository maintained by a consortium of German institutions.

A lot of the guidelines Schoening proposes are good common sense, but that does not make them easy to implement. Oceanography places heavy emphasis on data collection, and the community is only now beginning to work out how to manage the huge influx of information. Coming up with long-term, widely accepted answers to these simple but vexing questions is a challenge. Without them, untold discoveries about all manner of phenomena will go unobserved on dusty hard drives tucked away on forgotten bookshelves.

Eric is a PhD student at the Scripps Institution of Oceanography. His research in the Jaffe Laboratory for Underwater Imaging focuses on developing methods to quantitatively label image data coming from the Scripps Plankton Camera System. When not science-ing, Eric can be found surfing, canoeing, or trying to learn how to cook.